- LLMs power agentic projects. Last year we talked about “Tool using LLMs”, before that “Chain of thought” or “Reasoning LLMs”, and in the years before that it was “Chatbots”. LLMs are sometimes superhumanly bright, but when it comes to consistency, and we look at their performance over multiple interactions, they frequently do very poorly. In the age of agents we call them poltergeists and genies. A genie should fulfill our wishes. A poltergeist is the agent’s other face, the one that explains why we should do the coding ourselves, or why it shouldn’t follow the instructions. The first lesson of agentic coding is how to get the genie and not the hallucinating poltergeist.

- Human in the loop - this is a project where the org/coder/etc. are too scared to let the agent make decisions on their behalf. A very sensible approach which does not scale. Not production.

- Agentic Production Projects - 80% of companies are doing agentic projects, but only 5% have brought them to production level. Every meetup discusses the 5%. Everyone is talking about their production projects. But it is all buzz and no production.

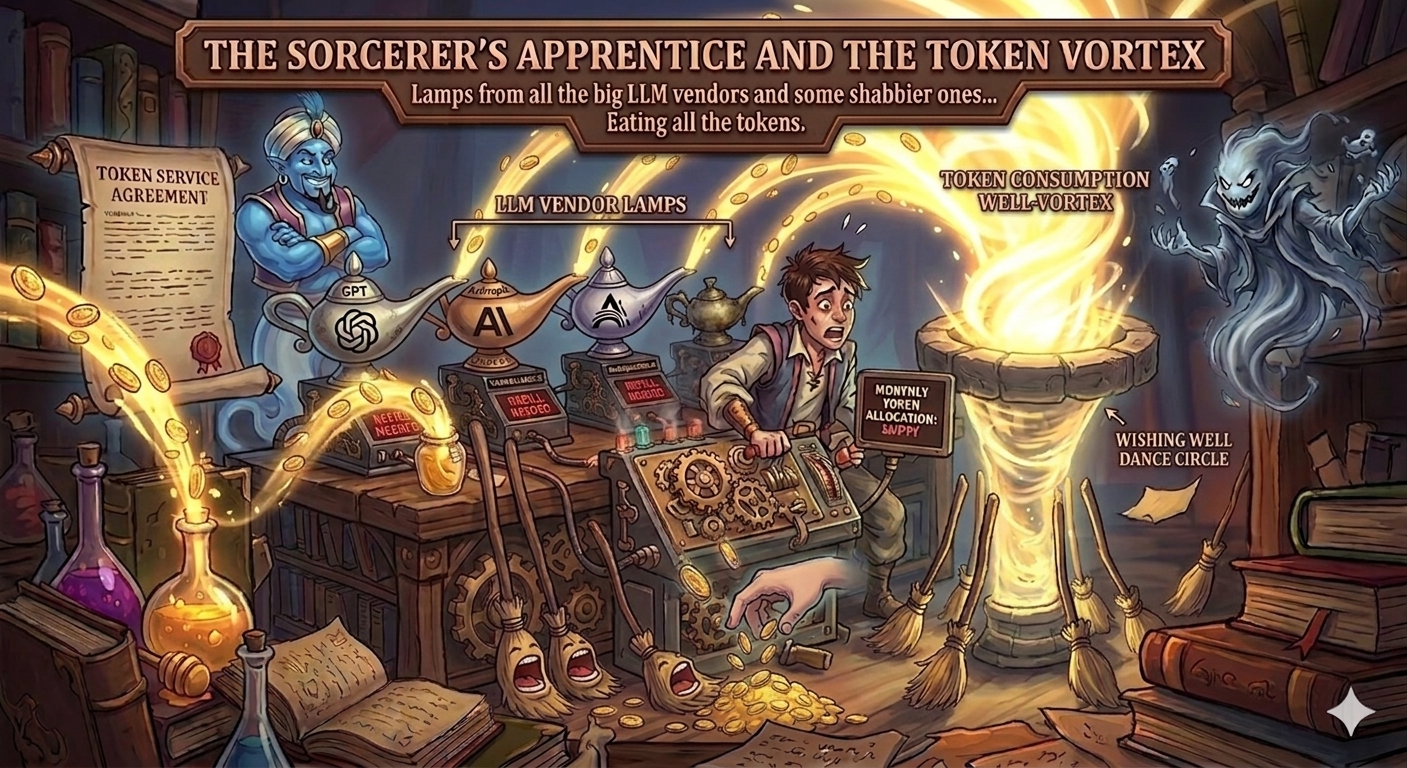

- Harness - this is a loop for keeping the genie on the task and the poltergeists at bay. Some people call it the Genie’s Lamp. If we are foolish about the loop, the agent can eat all our token budgets.
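To make the harness idea concrete, here is a minimal sketch of such a loop with a step limit and a token budget. `StepResult`, `run_harness`, and `make_fake_step` are hypothetical names invented for this illustration, and the fake step stands in for a real LLM call; real harnesses do far more than this.

```python
from dataclasses import dataclass
from typing import Callable, Tuple


@dataclass
class StepResult:
    output: str        # what the agent produced on this step
    tokens_used: int   # tokens billed for this step
    done: bool         # True when the agent reports the task is finished


def run_harness(step: Callable[[str], StepResult], task: str,
                token_budget: int, max_steps: int = 10) -> Tuple[str, int, str]:
    """Call `step` until it is done, the budget is spent, or steps run out."""
    spent = 0
    last_output = ""
    for _ in range(max_steps):
        result = step(task)
        spent += result.tokens_used
        last_output = result.output
        if result.done:
            return last_output, spent, "done"
        if spent >= token_budget:
            return last_output, spent, "budget_exhausted"
    return last_output, spent, "max_steps"


def make_fake_step() -> Callable[[str], StepResult]:
    """Hypothetical stand-in for an LLM call: finishes on its third step."""
    calls = {"n": 0}

    def fake_step(task: str) -> StepResult:
        calls["n"] += 1
        return StepResult(f"step {calls['n']} on {task}",
                          tokens_used=100, done=calls["n"] >= 3)

    return fake_step


if __name__ == "__main__":
    print(run_harness(make_fake_step(), "fix bug", token_budget=1000))
```

The point of returning a status string is that the caller can tell a finished genie apart from one that was cut off by the budget.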

- Token - the currency of the realm. We have to pay for work by the token. And if you think tokens are just encoded words - there are tokens for pictures, videos, audio, and even code. [The lamps you get from the LLM vendors are set up to stop after only a few tokens and ask for more instructions, so that eventually the sorcerer’s apprentice will put all the broomsticks on autopilot, only to see them eating all the tokens for the month.] Also, lots of tokens fill the context window.

- Context Window - the number of tokens that can be used in a single interaction with the LLM. This is a hard limit, so it is important to keep track of how many tokens are used by the prompt, the response, and any additional context. If you exceed the context window, you will typically get an error and your agent will not be able to complete its task.
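One common way to stay under the limit is to drop the oldest messages first. The sketch below assumes a crude one-token-per-word estimate, which is not how real tokenizers count; `estimate_tokens` and `trim_history` are hypothetical helpers for illustration only.

```python
from typing import List


def estimate_tokens(text: str) -> int:
    """Crude estimate: one token per whitespace-separated word.

    This is an assumption for the sketch; real tokenizers count differently.
    """
    return len(text.split())


def trim_history(messages: List[str], max_tokens: int) -> List[str]:
    """Keep the most recent messages whose estimated total fits in max_tokens."""
    kept: List[str] = []
    total = 0
    for message in reversed(messages):      # walk from newest to oldest
        cost = estimate_tokens(message)
        if total + cost > max_tokens:
            break                           # everything older is dropped too
        kept.append(message)
        total += cost
    return list(reversed(kept))             # restore chronological order


if __name__ == "__main__":
    history = ["a b c", "d e", "f g h i"]
    print(trim_history(history, 6))
```

Dropping whole messages keeps the remaining context coherent, at the price of forgetting the early part of the conversation.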

- Guard rails - think GH Actions-style checks that run before commits and/or before a command-line or tool invocation, and also after calling an LLM.
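As a rough sketch of a guard rail that runs before a command-line invocation, the check below vets a command against an allowlist. The allowlist, the blocked arguments, and `check_command` are all hypothetical choices for this example, not a recommended policy.

```python
import shlex
from typing import Tuple

ALLOWED_COMMANDS = {"git", "pytest", "ls"}   # hypothetical allowlist
BLOCKED_ARGS = {"--force", "--hard"}         # hypothetical blocklist


def check_command(command_line: str) -> Tuple[bool, str]:
    """Return (allowed, reason) for a command the agent wants to run."""
    try:
        parts = shlex.split(command_line)
    except ValueError as exc:                # e.g. unbalanced quotes
        return False, f"unparseable command: {exc}"
    if not parts:
        return False, "empty command"
    if parts[0] not in ALLOWED_COMMANDS:
        return False, f"command not allowed: {parts[0]}"
    blocked = BLOCKED_ARGS.intersection(parts[1:])
    if blocked:
        return False, f"blocked argument: {sorted(blocked)[0]}"
    return True, "ok"


if __name__ == "__main__":
    for cmd in ["git status", "rm -rf /", "git push --force"]:
        print(cmd, "->", check_command(cmd))
```

An allowlist fails closed: anything the agent invents that we did not anticipate is rejected by default.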

- Specs - organizing project interaction via markdown spec documents that can be shared with agents

- Checklists - agents love checklists and they are also a powerful paradigm for mission and workflow consistency
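A checklist only helps consistency if something reads it back. Assuming the checklist is kept as Markdown task-list items (`- [ ]` and `- [x]`), a harness could find the next open item like this; `parse_checklist` and `next_open_item` are hypothetical helpers.

```python
import re
from typing import List, Optional, Tuple

# Matches Markdown task-list lines such as "- [ ] add tests" or "* [x] done".
CHECKBOX = re.compile(r"^\s*[-*]\s+\[( |x|X)\]\s+(.*)$")


def parse_checklist(markdown: str) -> List[Tuple[bool, str]]:
    """Return (is_done, text) for every task-list line in the document."""
    items = []
    for line in markdown.splitlines():
        match = CHECKBOX.match(line)
        if match:
            items.append((match.group(1).lower() == "x", match.group(2).strip()))
    return items


def next_open_item(markdown: str) -> Optional[str]:
    """Return the first unchecked item, or None when everything is done."""
    for done, text in parse_checklist(markdown):
        if not done:
            return text
    return None


if __name__ == "__main__":
    plan = "- [x] write spec\n- [ ] add tests\n- [ ] open PR\n"
    print(next_open_item(plan))
```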

- VS Code Custom agent - “The built-in agents provide general-purpose configurations for chat in VS Code. For a more tailored chat experience, you can create your own custom agents.” c.f. https://code.visualstudio.com/docs/copilot/customization/custom-agents

- VS Code Background agent - run with a local branch - should be checked wrt pull requests

- VS Code Cloud agent - think GitHub Actions - agents that can be run on GH. Can run longer and slower - [Tasks should have high success probability.] [Q. How do we monitor progress/cost/outcomes?] c.f. https://code.visualstudio.com/docs/copilot/agents/cloud-agents

- VS Code Planning agent

- Copilot Coding agents cf https://docs.github.com/en/copilot/how-tos/use-copilot-agents/manage-agents

- agent skills - c.f. https://code.visualstudio.com/docs/copilot/customization/agent-skills

- MCP (Model Context Protocol) - and training for tool use!

- Procreate - Python library used to verify that JSON complies with a spec
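The API of the library named above is not shown here. As a standard-library-only sketch of the same idea, checking that an agent's JSON output matches an expected shape, something like the following could work. `SPEC` and `validate_json` are hypothetical and far simpler than a real schema validator.

```python
import json
from typing import Any, Dict, List

# Hypothetical spec: required field name -> expected Python type.
SPEC: Dict[str, type] = {"task": str, "priority": int, "tags": list}


def validate_json(text: str, spec: Dict[str, type]) -> List[str]:
    """Return a list of problems; an empty list means the JSON fits the spec."""
    try:
        data: Any = json.loads(text)
    except json.JSONDecodeError as exc:
        return [f"invalid JSON: {exc.msg}"]
    if not isinstance(data, dict):
        return ["top-level value must be an object"]
    errors = []
    for field, expected in spec.items():
        if field not in data:
            errors.append(f"missing field: {field}")
        # Note: JSON true/false would pass an int check, since bool subclasses int.
        elif not isinstance(data[field], expected):
            errors.append(f"wrong type for {field}: expected {expected.__name__}")
    return errors


if __name__ == "__main__":
    print(validate_json('{"task": "x", "priority": "high"}', SPEC))
```

Returning a list of problems, rather than raising on the first one, gives the agent everything it needs to repair its output in one retry.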

- Playwright - to automate the browser.

- LangSmith - to trace and monitor agent performance

- LangGraph - to build agent workflows as graphs and to visualize agent interactions

- LangChain - to build agent workflows

- Mixture of Experts and other voting schemes
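Strictly speaking, Mixture of Experts usually refers to routing inside a model, so the sketch below only illustrates the “voting schemes” half: ask several times (or ask several models) and keep the answer most of them agree on. `majority_vote` and its `min_share` threshold are hypothetical choices for this example.

```python
from collections import Counter
from typing import List, Optional, Tuple


def majority_vote(answers: List[str],
                  min_share: float = 0.5) -> Tuple[Optional[str], float]:
    """Return (winner, share); winner is None unless share exceeds min_share."""
    if not answers:
        return None, 0.0
    # Normalize so "42" and "42 " count as the same vote.
    normalized = [a.strip().lower() for a in answers]
    winner, count = Counter(normalized).most_common(1)[0]
    share = count / len(normalized)
    if share <= min_share:
        return None, share      # no clear majority, so escalate or retry
    return winner, share


if __name__ == "__main__":
    print(majority_vote(["42", "42 ", "41"]))
```

Refusing to pick a winner without a clear majority is a cheap signal that the genie may not be the one answering.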

- Custom workflows - this can mean a couple of things:

    - originally, a GitHub Action.

    - an agent running in the cloud using a GitHub Action

- Perf Monitoring GPU and up - KV cache optimization

- tracing

- Hooks (e.g. commit + gen comments for rollback)

- Steering

    - instructions given to a (long-running) agent to steer it back on track if it goes off the rails. This is a bit like the “guard rails” above, but it is more about course correction than prevention.

    - Steering can also be used in chat to provide additional context after the initial prompt but before the model completes its response.
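One way to picture steering in a long-running loop: the human drops notes into a queue, and the loop folds them into the context before the next model call. This is only a sketch under that assumption; `SteeringQueue` and `build_next_prompt` are hypothetical names and do not describe how any particular tool implements steering.

```python
from collections import deque
from typing import Deque, List


class SteeringQueue:
    """Holds human steering notes until the agent loop reaches its next step."""

    def __init__(self) -> None:
        self._pending: Deque[str] = deque()

    def steer(self, note: str) -> None:
        """Called by the human at any time while the agent is running."""
        self._pending.append(note)

    def drain(self) -> List[str]:
        """Return all pending notes in arrival order and clear the queue."""
        notes = list(self._pending)
        self._pending.clear()
        return notes


def build_next_prompt(history: List[str], steering: SteeringQueue) -> List[str]:
    """Append any pending steering notes to the history before the next call."""
    notes = [f"[steering] {note}" for note in steering.drain()]
    return history + notes


if __name__ == "__main__":
    queue = SteeringQueue()
    queue.steer("stay in src/")
    print(build_next_prompt(["task: refactor"], queue))
```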

- Detect Hallucinations - a big topic since there are apparently a number of reasons why hallucinations happen:

- Two abstractions

- define hallucination as a random answer that is not based on the prompt/history/training data

- Most Transformers are either explicitly state based models or equivalent to state based models. Hallucinations can be defined as entering a “defective” regime. The main issue being that once we enter the defective regime we are unlikely to get back to the “normal” regime.

- Probabilities are represented as log likelihoods and these numbers get smaller the longer the prompt/history. The smaller they are the more negative they become.

- The “happy path” in QA is when everything works fine. Say the response is 500 words and each word has a probability of 0.99. The log likelihood for the response is 500 × log(0.99) ≈ -5. This is a high log likelihood, i.e. close to zero.

- The “unhappy path” might be when the best answer has small probabilities: 500 × log(0.0001) = 500 × (-9.21) ≈ -4605. If the answer is “very likely”, i.e. all the words have a high probability of being generated in the response (the “happy path” in QA), then the log likelihood will be small in magnitude. If there are some contradictions in the prompt/history/memory, the log likelihood can become so negative that the underlying probability underflows, and we can enter a “defective” regime where we are likely to get random answers.
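The arithmetic in the happy and unhappy paths can be checked directly. The sketch below uses natural logs and the same simplifying assumption that every word has the same probability; it also shows that multiplying the raw probabilities underflows in float64, which is why log space is used. It does not test the hypothesis about a “defective” regime. `sequence_log_likelihood` is a hypothetical helper.

```python
import math


def sequence_log_likelihood(token_prob: float, length: int) -> float:
    """Log likelihood of `length` tokens that each have probability `token_prob`."""
    return length * math.log(token_prob)


happy = sequence_log_likelihood(0.99, 500)       # about -5.03
unhappy = sequence_log_likelihood(0.0001, 500)   # about -4605.17

# Multiplying raw probabilities instead of summing logs:
raw_happy = 0.99 ** 500       # about 0.0066, still representable
raw_unhappy = 0.0001 ** 500   # 1e-2000 is below float64 range, so this is 0.0

if __name__ == "__main__":
    print(f"happy path log likelihood:   {happy:.2f}")
    print(f"unhappy path log likelihood: {unhappy:.2f}")
    print(f"raw probabilities: {raw_happy:.4f} vs {raw_unhappy}")
```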

- Poisoned tokens - we know that certain tokens can jailbreak the model and cause it to produce random answers. These are artifacts of the tokenization and are highly unlikely to arise in normal testing of the model unless the “lookup table” is reverse engineered and the “poisoned tokens” are identified and included in the prompt. This is just one of many ideas used to jailbreak a model, and any of these can lead to hallucinations, so just fixing the tokenizer isn’t the fix for this class of hallucinations.

- “May the odds be ever in your favor” FAIL - the probability for the right answer is lower than that of the other answers.

- small e.g. POS (part-of-speech) tagging fails due to p(N|man) >> p(V|man) in “the old man the boat”

- big e.g. bias against Robert Moses

- personality drift c.f. paper YT video

- probabilistic contradictions - let’s suppose that the LLM is smart enough that having a contradiction in the prompt/history results in a high chance of a hallucinated (random) answer. Unfortunately, LLMs represent probabilities as log likelihoods, and these numbers get smaller the longer the prompt/history. If the answer is very likely for all words in the response (the “happy path” in QA), then the log likelihood will be small in magnitude. If there are some contradictions in the prompt/history/memory, the underlying probability can underflow.

- “out of distribution (the model never learned the answer)”

- “attention defect” - your answer requires the attention mechanism to attend to many locations that are too far apart to effectively return a signal. Hard to come up with examples. Attention is pretty amazing at learning relations. GRUs and Transformers are pretty amazing at operating over a long context. However, all the stuff above can mess them up, even if one is on the happy path.


- [chat participant api] - a VS Code extension API that allows you to create a “chat participant” that can be used in the chat window. This is a bit like a custom agent but is more focused on providing a specific interface for interacting with the model in the chat window. For example, you could create a “chat participant” that provides a specific set of commands or functions for use in the chat window, making for a more interactive and engaging chat experience.

- Shadow AI - use of generative AI at work that is not monitored or controlled by the organization.

Citation

BibTeX citation:

@online{bochman2026,

author = {Bochman, Oren},

title = {Agentic {Buzz}},

date = {2026-01-03},

url = {https://orenbochman.github.io/posts/2026/2026-01-03-agentic-buzz/},

langid = {en}

}

For attribution, please cite this work as:

Bochman, Oren. 2026. “Agentic Buzz.” January 3. https://orenbochman.github.io/posts/2026/2026-01-03-agentic-buzz/.