---

title: "Lesson 2: The target Tracking application"

subtitle: "Appendix"

description: "This appendix explains the Kalman Filter, a mathematical method for estimating the state of a dynamic system from a series of noisy measurements."

categories:

- "Probability and Statistics"

keywords:

- "Kalman Filter"

- "state estimation"

- "linear algebra"

---

## The target Tracking application {#sec-kf-1.2.2-target-tracking}

::: {.callout-tip collapse="true"}

### Greek Notation

1. This section uses Greek letters to denote motion in the x and y direction.

2. The state vector is defined as $x(t) = [\xi(t), \eta(t), \dot{\xi}(t), \dot{\eta}(t)]^T$.

3. The reason is rather mundane - the instructor avoids circular definitions and any chance of confusion arising from reusing the same letter for different things. X is already taken for the state vector. So we use $\xi$ and $\eta$ for the x and y coordinates, respectively.

4. He also uses k for time index which I have replaced.

:::

- One application of KFs is for target tracking. [**target tracking problem**]{.column-margin}

- We wish to predict the future location of a dynamic system (the target) based on KF estimates and measurements.

- Ideally we know all the matrices and signals of its continuous-time linear state-space model

$$

\begin{aligned}

\dot{x}_t &= A x(t) + B u(t) + w(t) \\

z_t &= C x(t) + D u(t) + v(t)

\end{aligned}

$$ {#eq-target-tracking-equations}

- in the target tracking application, we typically don't know u(t)$ nor the state space matrices very well.

- we may need to approximate target dynamics based on some assumed behaviors.

- One idea is to come up with a hyper-prior that allows us to assign *intuitive* dynamics to the target. c.f. [@battagliaintuitive] work on intuitive physics

- If we roll our own we might use:

- a nearly constant position (NCP) model,

- a near constant velocity (NCV) model, or

- a coordinated-turn (CT) model.

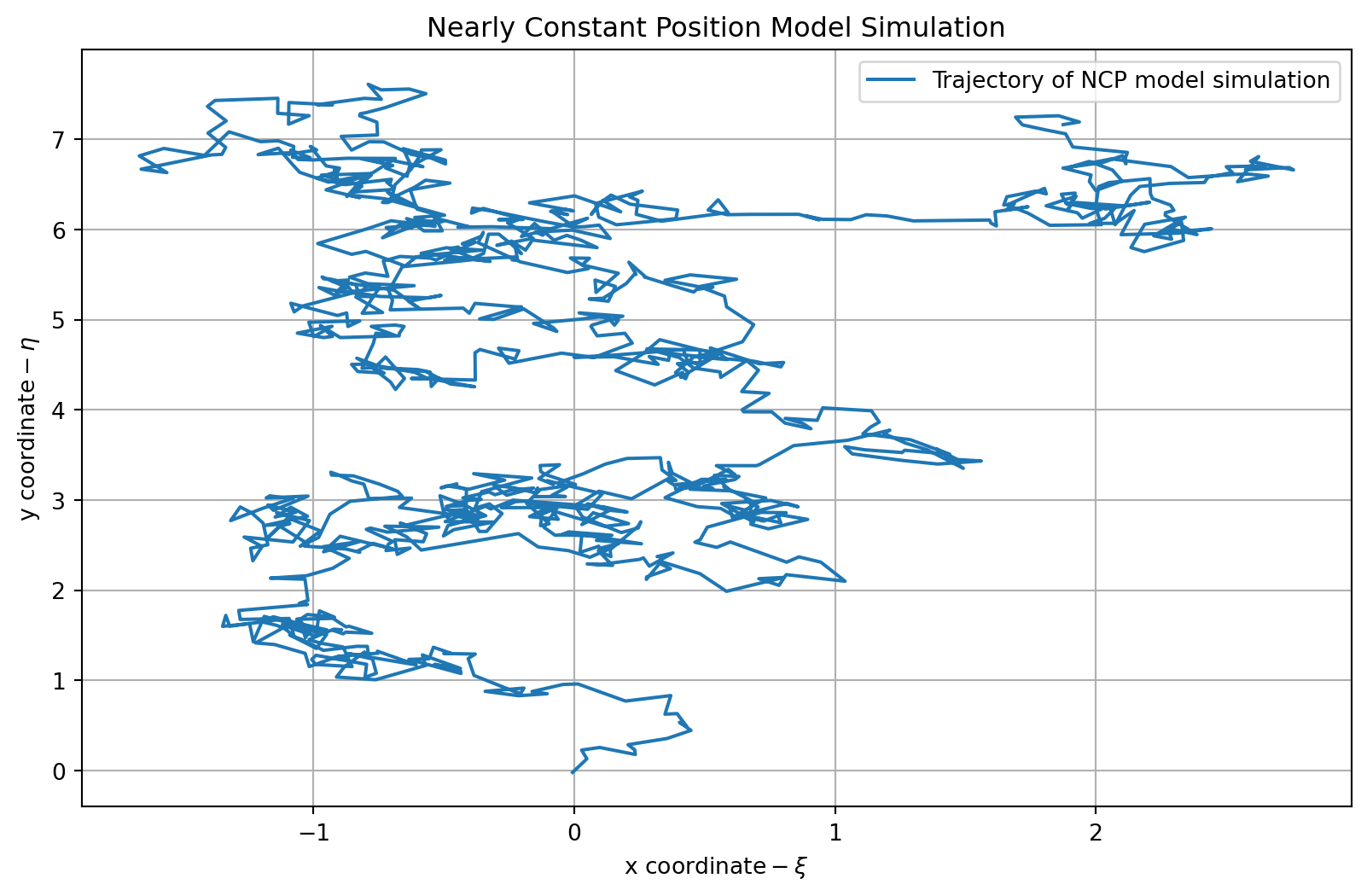

### The nearly constant position (NCP) model

In the NCP model, we assume that the target is stationary.

$$

x(t) = \begin{bmatrix} \xi(t) \\ \eta(t) \end{bmatrix}

$$ {#eq-ncp-model-state-vector}

- our models state equations is

$$

\dot{x}(t) = 0 x(t) + w(t)

$$ {#eq-ncp-model-state-equation}

- with $A= 0_{2\times 2}$ and $B=0_{2\times2}$

- our model's observation equation might be:

$$

z(t) = x(t) + v(t)

$$ {#eq-ncp-model-observation-equation}

- with $C= I_{2\times 2}$ and $D=0_{2\times2}$

```{python}

#| label: ncp-model

#| lst-label: lst-ncp-model

#| lst-cap: "NCP model simulation code"

import numpy as np

import matplotlib.pyplot as plt

maxT = 1000; # <1>

dT = 0.1; # <2>

# Define the NCP model

Ancp = np.eye(2)

Bwncp = np.eye(2)

Cncp = np.eye(2)

Dncp = np.zeros((2,2))

np.random.seed(42) # <3>

wncp = 0.1*np.random.randn(2,maxT)

vncp = 0.01*np.random.randn(2,maxT)

xncp = np.zeros(shape=(2,maxT)) # <4>

xncp[:,0] = [0,0] # <5>

for k in range(1,maxT): # <6>

xncp[:,k] = Ancp @ xncp[:,k-1] + Bwncp @ wncp[:,k-1]

zncp = Cncp @ xncp + vncp

```

1. maximum simulation time, used for all models

2. sample period used by all models (in seconds)

3. set seed for reproducibility,

4. storage

5. initial position

6. simulate the model

```{python}

#| label: fig-plot-ncp

#| fig-cap: "Plot of the NCP model simulation trajectory"

plt.figure(figsize=(10, 6))

plt.plot(zncp[0, :], zncp[1, :], label='Trajectory of NCP model simulation')

plt.title('Nearly Constant Position Model Simulation')

plt.xlabel(r'$\text{x coordinate} - \xi$')

plt.ylabel(r'$\text{y coordinate} - \eta$')

plt.legend()

plt.grid()

plt.show()

```

```{python}

#| label: lst-ncp-model-final-coordinate

print(f"Final coordinate of the trajectory: {zncp[:, -1]}") # <1>

```

1. Display the final coordinate of the trajectory

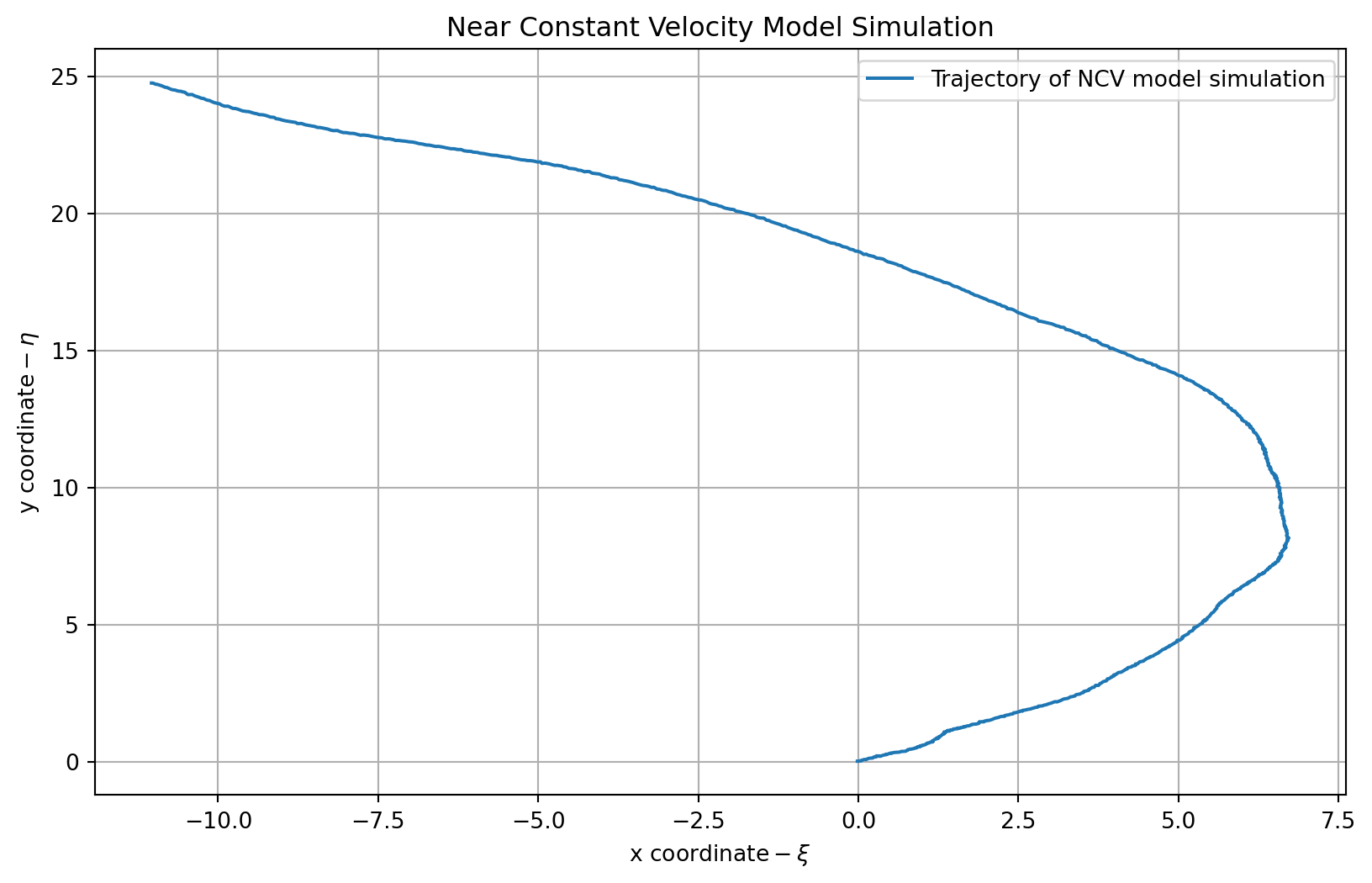

### The nearly constant velocity (NCV) model

- We now extend our target tracking model from static object to one having momentum: its velocity is nearly constant, but gets perturbed by external forces.

- The state vector is now:

$$

x(t) = \begin{bmatrix}

\xi(t) \\

\dot{\xi}(t) \\

\eta(t) \\

\dot{\eta}(t)

\end{bmatrix}

$$ {#eq-ncv-model-state-vector}

- the state equations are:

$$

\dot{x}(t) =

\underbrace{

\begin{bmatrix}

0 & 1 & 0 & 0 \\

0 & 0 & 0 & 0 \\

0 & 0 & 0 & 1 \\

0 & 0 & 0 & 0

\end{bmatrix} x(t) }_{A} +

\underbrace{

\begin{bmatrix}

0 & 0\\ 1&0 \\ 0&0 \\ 0&1

\end{bmatrix} x(t) +

\underbrace{\tilde{\omega}(t)}_{\in \mathbb{R}_{2\times 1}}

}_{\omega(t)}

$$ {#eq-ncv-model-state-equation}

- the observation equation might be:

$$

z(t) =

\underbrace{

\begin{bmatrix}

1 & 0 & 0 & 0 \\

0 & 0 & 1 & 0

\end{bmatrix}}_{C} x(t) + \nu(t)

$$ {#eq-ncv-model-observation-equation}

```{python}

#| label: ncv-model

#| lst-label: lst-ncv-model

#| lst-cap: "NCV model simulation code"

# Define the NCV model

Ancv = np.array([[1, dT, 0, 0],

[0, 1, 0, 0],

[0, 0, 1, dT],

[0, 0, 0, 1]])

Bwncv = np.array([[dT**2/2, 0],

[dT, 0],

[0, dT**2/2],

[0, dT]])

Cncv = np.array([[1, 0, 0, 0],

[0, 0, 1, 0]])

Dncv = np.zeros((2, 2))

np.random.seed(0) # Don't change this line

wncv = 0.1*np.random.randn(2,maxT)

vncv = 0.01*np.random.randn(2,maxT)

xncv = np.zeros((4,maxT)) # storage

xncv[:,0] = [0,0.1,0,0.1] # initial position, velocity

#xncp = np.zeros(shape=(2,maxT)) # storage

#xncp[:,0] = [0,0] # initial posn.

for k in range(1,maxT): # simulate model

xncv[:,k] = Ancv @ xncv[:,k-1] + Bwncv @ wncv[:,k-1]

zncv = Cncv @ xncv + vncv

```

```{python}

#| label: fig-plot-ncv

#| fig-cap: "Plot of the NCV model simulation trajectory"

# Plot the results

plt.figure(figsize=(10, 6))

plt.plot(zncv[0, :], zncv[1, :], label='Trajectory of NCV model simulation')

plt.title('Near Constant Velocity Model Simulation')

plt.xlabel(r'$\text{x coordinate} - \xi$')

plt.ylabel(r'$\text{y coordinate} - \eta$')

plt.legend()

plt.grid()

plt.show()

```

```{python}

#| label: lst-ncv-model-final-coordinate

print(f"Final coordinate of the trajectory: {zncv[:, -1]}") # <1>

```

1. Display the final coordinate of the trajectory

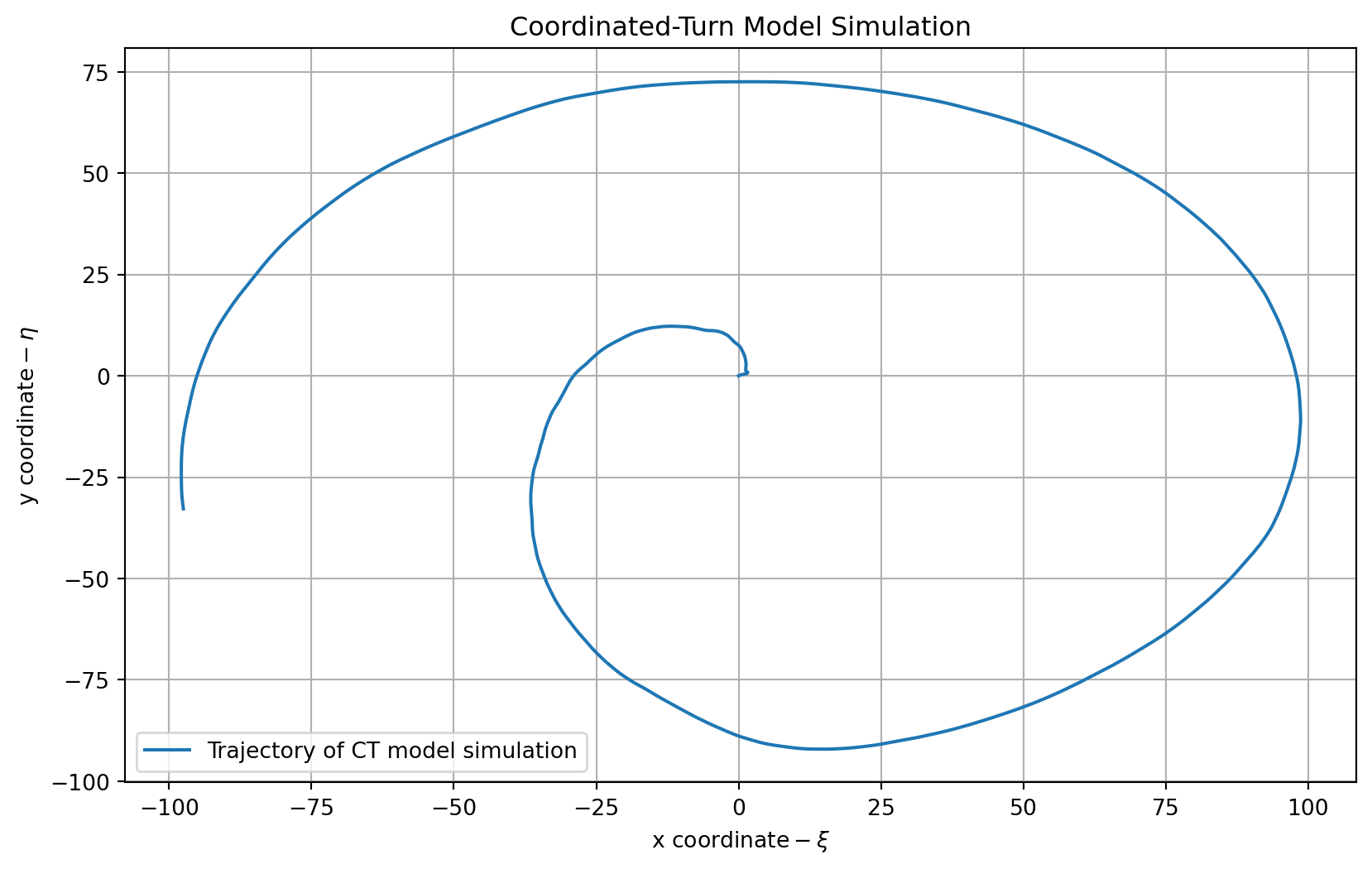

### The coordinated-turn model

We now extend our target tracking model from one having momentum to one moving a **constant angular velocity** $\Omega$

$$

\ddot{\xi}(t) = -\Omega\dot{\eta}(t) \qquad \ddot{\eta}(t) = \Omega\dot{\xi}(t)

$$ {#eq-ct-model-acceleration-equations}

- The state vector is now:

$$

x(t) = \begin{bmatrix}

\xi(t) \\

\dot{\xi}(t) \\

\eta(t) \\

\dot{\eta}(t)

\end{bmatrix}

$$ {#eq-ct-model-state-vector}

- the state equations are:

$$

\dot{x}(t) =

\underbrace{

\begin{bmatrix}

0 & 1 & 0 & 0 \\

0 & 0 & 0 & -\Omega \\

0 & 0 & 0 & 1 \\

0 & \Omega & 0 & 1

\end{bmatrix} x(t) }_{A} +

\underbrace{

\begin{bmatrix}

0 & 0\\ 1&0 \\ 0&0 \\ 0&1

\end{bmatrix} x(t) +

\underbrace{\tilde{\omega}(t)}_{\in \mathbb{R}_{2\times 1}}

}_{\omega(t)}

$$ {#eq-ct-model-state-equation}

- the observation equation might be:

$$

z(t) =

\underbrace{

\begin{bmatrix}

1 & 0 & 0 & 0 \\

0 & 0 & 1 & 0

\end{bmatrix}}_{C} x(t) + \nu(t)

$$ {#eq-ct-model-observation-equation}

```{python}

# Define the coordinated-turn model

import numpy as np

from scipy.signal import StateSpace, lsim

# Parameters

W = 0.01

A = np.array([

[0, 1, 0, 0],

[0, 0, 0, -W],

[0, 0, 0, 1],

[0, W, 0, 0]

])

Bw = np.array([

[0, 0],

[1, 0],

[0, 0],

[0, 1]

])

C = np.array([

[1, 0, 0, 0],

[0, 0, 1, 0]

])

D = np.zeros((2, 2))

# Create CT state-space model

ct = StateSpace(A, Bw, C, D)

# Time vector and noise

np.random.seed(0) # Match Octave's `randn("state", 0)`

tct = np.arange(1000) * 1.0

wct = 0.01 * np.random.randn(1000, 2) # shape (n_samples, n_inputs)

vct = 0.001 * np.random.randn(1000, 2)

# Simulate system

_, yout, _ = lsim(ct, U=wct, T=tct)

# Add sensor noise

zct = yout + vct

```

```{python}

# Plot the results

plt.figure(figsize=(10, 6))

plt.plot(zct[:, 0], zct[:, 1], label='Trajectory of CT model simulation')

plt.title('Coordinated-Turn Model Simulation')

plt.xlabel(r'$\text{x coordinate} - \xi$')

plt.ylabel(r'$\text{y coordinate} - \eta$')

plt.legend()

plt.grid()

plt.show()

```

```{python}

# Display the final coordinate of the trajectory

print(f"Final coordinate of the trajectory: {zct[-1, :]}")

```

::: {.callout-tip collapse="true"}

### Overthinking the CT model

since

question why not $\ddot{\eta}(t) = \dot{\xi}(t) = 0$?

so am I correct that we should have the following state vector?

$$

x(t) = \begin{bmatrix}

\xi(t) \\

\dot{\xi}(t) \\

\eta(t) \\

\dot{\eta}(t) \\

\ddot{\xi}(t) \\

\ddot{\eta}(t)

\end{bmatrix}

$$ {#eq-ct-model-state-vector-extended}

and the state equations are:

$$

\dot{x}(t) =

\underbrace{

\begin{bmatrix}

0 & 1 & 0 & 0 & 0 & 0 \\

0 & 0 & 0 & 0 & 1 & -\Omega \\

0 & 0 & 0 & 0 & 0 & 0 \\

0 & 0 & 0 & 0 & 0 & 0 \\

0 & 0 & \Omega & 0 & 0 & 0 \\

0 & 0 & 0 & 0 & 0 & 0

\end{bmatrix} x(t) }_{A} +

\underbrace{

\begin{bmatrix}

0 & 0 & 0 & 0\\

1 & 0 & 0 & 0 \\

0 & 0 & 0 & 0 \\

0 & 1 & 0 & 0 \\

0 & 0 & 0 & 0 \\

0 & 0 & 0 & 0

\end{bmatrix} \tilde{\omega}(t)}_{\tilde{\omega}(t)}

$$ {#eq-ct-model-state-equation-extended}

So while this took a while to formulate, it turns out that the CT model is fine. There were a number of factors contributing to my confusion.

1. The fact that this is a constant angular velocity model, so the acceleration is not zero.

- what changes is the direction and not size of the velocity vector.

- I initially didn't notice that the angular velocity is constant by definition.

2. I recalled that we need to split the velocity into two orthogonal components over time

3. The frame of reference is not explained explicitly.

- If we are in the object's frame of reference, then the angular velocity components are constant.

- If we are in the world's frame of reference, then everything is more complicated and the angular velocity components are changing sinusoidally.

4. There are a bunch of apparent forces/motions involved in circular motion on a spherical surface that surfaced in my mind.... Before I was able to make things more precise and clear.

5. How first order state representation vectors work, this is what convinced me the formulation is indeed correct and that my idea is most likely to be wrong!

- the idea is simple in @eq-ct-model-state-equation we have the first derivative of the state vector $x(t)$, which is a vector of position and velocity. The acceleration @eq-ct-model-acceleration-equations have been incorporated into the state vector.

- We can recover them by taking the second derivative of the state vector!

:::

## Understanding the time-domain response of a state-space model {#sec-kf-1.2.3-time-domain-response}

### Intro to continuous-time state-space models

- You have seen that simulation is a valuable tool for understanding state-space models.

- But, we would like to develop more insight into the system response by more general analytic means; specifically, by looking at the time-domain solution for $x(t)$.

- We solve first for the homogeneous solution $(u(t) = 0)$:

- Start with $\dot x(t) = A x(t)$ and some initial state $x(0)$.

- Take Laplace transform:

$$

\begin{aligned}

sX(s) - x(0) &= AX(s) && \text{Initial value theorem}

\\ (sI - A)X(s) &= x(0) && \text{take care with matrix dimensions!}

\\ X(s) &= (sI - A)^{-1}x(0) && \text{Assume invertible}

\end{aligned}

$$

Detailed Laplace-transform knowledge will be helpful

- So far we have:

$$

x(t) = \mathcal{L}^{-1}[(sI - A)^{-1}]x(0)

$$ {#eq-ct-model-homogeneous-solution}

### The state-transition matrix

- Recapping, we have: @eq-ct-model-homogeneous-solution. But,

$$

(sI - A)^{-1} = \frac{I}{s}+\frac{A}{s^2}+\frac{A^2}{s^3}+\frac{A^3}{s^4}+\cdots \quad\text{(geometric series)}

$$

so,

$$

\mathcal{L}^{-1}[sI - A]^{-1} = I + At + \frac{1}{2!}A^2t^2 + \frac{1}{3!}A^3t^3 + \cdots \stackrel{\triangle}{=} e^{At} \quad\text{(matrix exponential)}

$$

- Therefore, we write $x(t) =e^{At} x(0)$

- $e^{At}$ is called the “transition matrix” or “state-transition matrix.”

- Note that $e^{At}$ is not the same as taking the exponential of every element of $At$.

- $e^{(A+B)t}=e^{At}e^{Bt}$ iff $AB=BA$: (that is, not in general).

- In Octave, can compute $x(t)$ as: `x = expm(A*t)*x0;`

- Will say more about $e^{At}$ when we discuss the structure of $A$.

### Computing the state-transition matrix by hand

- Computing $e^{At} = \mathcal{L}^{-1}[sI - A]^{-1}$ is straightforward for $2 \times 2$.

- EXAMPLE: Find $e^{At}$ when $A = \begin{bmatrix} 0 & 1 \\ 2 & 3 \end{bmatrix}$.

- Solve:

$$

\begin{aligned}

(sI - A)^{-1} &= \begin{bmatrix} s & -1 \\ 2 & s+3 \end{bmatrix}^{-1} \\

&= \frac{1}{s^2 + 3s + 2} \begin{bmatrix} s+3 & 1 \\ -2 & s \end{bmatrix} \\

&= \begin{bmatrix}

\frac{ 2}{s+1} - \frac{1}{s+2} & \frac{ 1}{s+1} - \frac{1}{s+2} \\

\frac{-2}{s+1} + \frac{2}{s+2} & \frac{-1}{s+1} + \frac{2}{s+2}

\end{bmatrix} \\

e^{At} &= \begin{bmatrix}

2e^{-t} - e^{-2t} & e^{-t} - e^{-2t} \\

-2e^{-t} + 2e^{-2t} & - e^{-t} + 2e^{-2t}

\end{bmatrix} \mathbb{1}(t)

\end{aligned}

$$

- This is probably the best way to find $e^{At}$ if the $A$ matrix is $2 \times 2$ .

### Forced state response

- Solving for the forced solution $(u(t) \ne 0)$, we find:

$$

x(t) = e^{At} x(0) + \underbrace{\int_0^t e^{A(t-\tau)} B u(\tau) d\tau}_{\text{convolution}}

$$

- Where did this come from?

1. ${\color{blue} \dot{x}(t) - A x(t)} = {\color{yellow}B u(t)}$, simply by rearranging the state equation.

2. Multiply both sides by $e^{At}$ and rewrite the LHS as the middle expression:

$$

e^{-At} {\color{blue}[\dot x(t) - A x(t)]} = {\color{red}\frac{d}{dt} (e^{-At} x(t))} = e^{-At} {\color{yellow}B u(t)} d\tau

$$

3. Integrate the middle and the RHS; rearrange and keep new middle and RHS:

$$

\begin{aligned}

\int_0^t {\color{red}\frac{d}{d\tau} [ e^{-A(t-\tau)} x(\tau)]} d\tau &= e^{-At}x(t)-x(0) \\

&= \int_0^t e^{-A(t-\tau)} {\color{yellow} B u(\tau)} d\tau

\end{aligned}

$$

### Forced output response

- So, we have established the following state dynamics:

$$

x(t) = e^{At} x(0) + \underbrace{\int_0^t e^{A(t-\tau)}{\color{yellow}B u(\tau)} d\tau}_{\text{convolution}}

$$

- Since $z(t) = C x(t) + Du(t)$, the system output is then comprised of three parts:

$$

z(t) =

\underbrace{\vphantom{\int_0^t} C e^{At} x(0)}_{\text{initial resp}}+

\underbrace{\int_0^t Ce^{A(t-\tau)}{\color{yellow}Bu(\tau)} d\tau}_{\text{convolution}} +

\underbrace{\vphantom{\int_0^t} Du(t)}_{\text{feed through}}

$$

### Summary

- [You have learned how to compute the **homogeneous** and **forced** response of a state-space model.]{.mark}

- The output $z(t)$ comprises of:

- [a response due to **initial conditions** :]{.mark} $C e^{At} x(0)$

- [a dynamic response due to **forcing input** :]{.mark} $\int_0^t Ce^{A(t-\tau)}Bu(\tau) d\tau$

- [an instantaneous response due to the **feed-through** :]{.mark} $Du(t)$

- These terms rely on the matrix exponential $e^{At}$.

- **Caution** $e^{At}$ is not the term by term exponential operation on elements of $At$.

- Don't compute it as: ~~`exp(A*t)`~~;

- One way to compute it (e.g., by hand) is via: $e^{At} = \mathcal{L}^{-1}[sI - A]^{-1}$.

- Or, in Octave, it is correct to compute: `expm(A*t)`;

- We will dive deeper into the matrix exponential in [@sec-kf-1.2.4-time-domain-response]

## Illustrating the time-domain response {#sec-kf-1.2.4-time-domain-response}

### Deconstructing the matrix exponential

- Have seen the key role of $e^{At}$ in the solution for $x(t)$.

- Impacts the system response, but need more insight.

- Consider what happens if the matrix A is diagonalizable, that is, there exists a

matrix $T$ such that $T^{-1}AT = \Delta$ is diagonal. Then,

$$

\begin{aligned}

e^{At} &= I + At + \frac{1}{2!}A^2t^2 + \frac{1}{3!}A^3t^3 + \cdots

\\ &= I + T \Delta T^{-1}t + \frac{1}{2!}T \Delta^2 T^{-1}t^2 + \frac{1}{3!}T \Delta^3 T^{-1}t^3 + \cdots

\\ &= T \left( I + \Delta t + \frac{1}{2!} \Delta^2 t^2 + \frac{1}{3!} \Delta^3 t^3 + \cdots \right) T^{-1}

\\ &= T e^{\Delta t} T^{-1},

\end{aligned}

$$

and

$$

e^{\Delta t} = \text{diag} \left( e^{\lambda_1 t}, e^{\lambda_2 t}, \ldots, e^{\lambda_n t} \right)

$$

### How to find the transformation matrix

- Much simpler form for the exponential, but how to find $T, \Delta$ ?

- Write $T^{-1}AT = \Delta$ as $T^{-1}A = \Delta T^{-1}$ with

$$

T^{-1} = \begin{bmatrix}

w_1^T \\

w_2^T \\

\vdots \\

w_n^T

\end{bmatrix}

\qquad w^T_j \text{ are the rows of } T^{-1}

$$

- We have: $w^T_i A = \lambda_i w^T_i$; so $w_i$ is a left eigenvector of $A$.

- Can also write $T^{-1}AT = \Delta$ as $AT = \Delta T$ with

$$

T = \begin{bmatrix} v_1 & v_2 & \cdots & v_n \end{bmatrix}

$$

i.e $v_j$ are the columns of $T$.

- We have $Av_i = \lambda_i v_i$, so $v_i$ is a right eigenvector of $A$. Also, since $T^{-1}T = I$, we have that $w^T_i v_j = \delta_{i,j}$

### How the deconstruction simplifies things

So $T$ comprises the eigenvectors of $A$. How does this help?

$$

e^{At} = Te^{\Delta t} T^{-1}

= \begin{bmatrix} v_1, v_2, \ldots, v_n \end{bmatrix}

\begin{bmatrix}

e^{\lambda_1 t} & 0 & \cdots & 0 \\

0 & e^{\lambda_2 t} & \cdots & 0 \\

\vdots & \vdots & \ddots & \vdots \\

0 & 0 & \cdots & e^{\lambda_n t}

\end{bmatrix}

\begin{bmatrix} w_1^T \\ w_2^T \\ \vdots \\ w_n^T \end{bmatrix}

= \sum _{}^n e^{\lambda_i t} v_i w_i^T

$$

- Very simple form, which can be used to develop intuition about dynamic response:

$$

x(t) = e^{At} x(0) =T e^{\Delta t} T^{-1} x(0) = \sum _{}^n e^{\lambda_i t} v_i w_i^T x(0).

$$

- Notice that the only time-varying components are $e^{\lambda_i t}$ , which are then multiplied by constant matrices so that the states are linear combinations of $e^{\lambda_i t}$ .

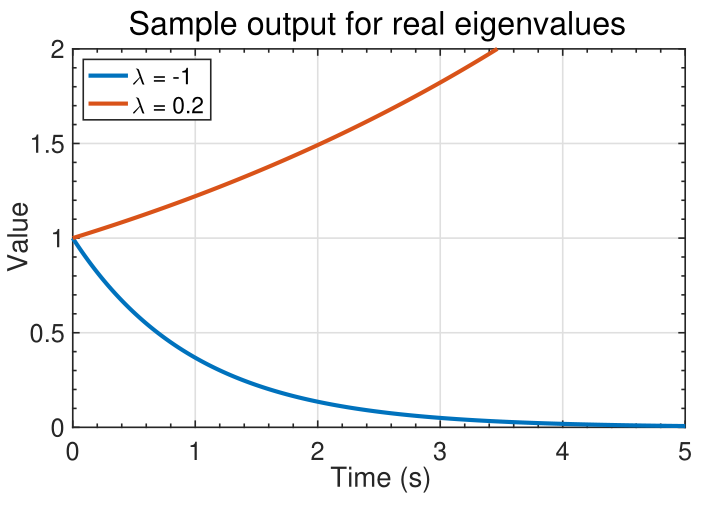

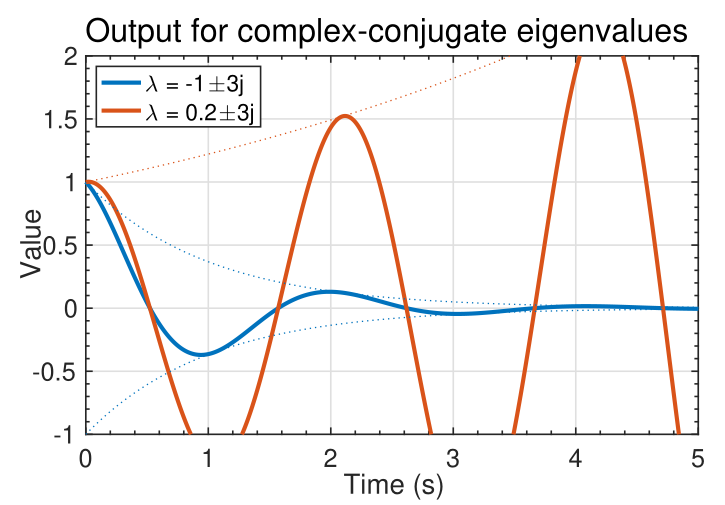

### Visualizing $e^{\lambda_i t}$

{#fig-kf-016 .column-margin group="slides" width="53mm"}

{#fig-kf-017 .column-margin group="slides" width="53mm"}

- The signal $e^{\lambda_it}$ can take on several different characteristics,

as shown in the figures below.

- If $\lambda_i$ is real, we observe a smooth monotonically growing or decaying signal.

- If $\lambda_i$ occur in complex-conjugate pairs, the output is of the pair is of the form $e^{\lambda_i t} \cos(\lambda_i t)$ : a cosine with an exponential

"envelope."

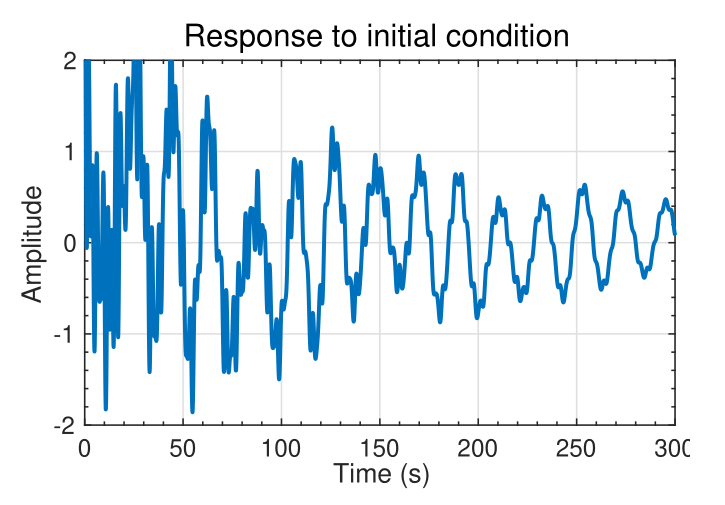

### An example to illustrate the complexity

{#fig-kf-018 .column-margin group="slides" width="53mm"}

- Consider again: $\sum _{i=1}^n e^{\lambda_i t} v_i (w^T_i x(0))$

- The state $x(t) = e^{At} x(0) =T e^{\Delta t} T^{-1} x(0) = \sum _{}^n e^{\lambda_i t} v_i w_i^T x(0)$ is a linear combination of the modes $e^{\lambda_i t} v_i$.

- The left eigenvectors $w_i^T$ decompose the initial state $x(0)$ into modal coordinates $w^T_i x(0)$.

- The modes $e^{\lambda_i t}$ propagate the modal components forward in time.

- The output $y(t) = C x(t)$ is

- Can be expressed as a linear combination of modes: $v_i e^{\lambda_i t}$.

- Left eigenvectors decompose $x(0)$ into modal coordinates $w^T_i x(0)$.

- $e^{\lambda_i t}$ propagates mode forward in time.

- EXAMPLE: Consider a specific system with $x(t) \in R^{16 \times 1}; \dot{y}(t) \in R (16-state, 1-output):Px(t) = Ax(t)$

$\dot{y}(t) = C x(t)$

- A lightly damped system, for which typical output to initial conditions is shown.

- Waveform is complicated. Appears random

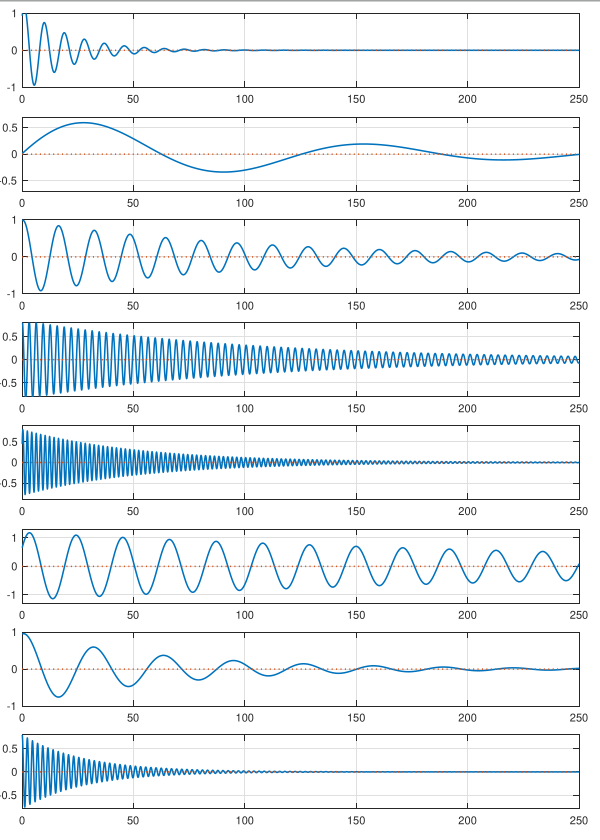

### Now we see the underlying simplicity

{#fig-kf-019 .column-margin group="slides" width="53mm"}

- However, the solution can be decomposed into much simpler modal components.

- The waveforms to the left show $e^{\lambda_i t}$ for complex-conjugate pairs $\lambda_i,\lambda_i^*$ summed together.

- All are decaying sinusoids with different

magnitudes, frequencies, and phase shifts.

- When summed together, the result appears complicated; but the individual components of the response are simple.

### Summary

- [The matrix exponential is key to understanding the response of a state-space model.]{.mark}

- As it's been a while since I saw the matrix exponent, it's worthwhile to refresh my intuition regarding what it looks like.^[Actually this is the first time I see the matrix exponential being used in an application]

- You have learned that we can usually write $e^{At} = T e^{\Delta t} T^{-1}$, where the columns of $T$ are the eigenvectors of $A$.

- All the dynamics are contained in $e^{\Delta t}$ , which turn out to be the standard scalar exponentials of (possibly complex) $\lambda_i t$ terms.

- So, the matrix exponential is a linear sum of exponentially-enveloped sinusoids.

- The frequencies of the sinusoids are the eigenvalues of $A$. The eigenvectors specify the relative weighting of each component to the overall output.

## Converting continuous-time state-space models to discrete-time {#sec-kf-1.2.5-continuous-to-discrete}

::: {.callout-tip collapse="true"}

### Questions {.unnumbered}

Here are question to consider as we learn how to convert continuous-time state-space models to discrete-time state-space models:

- What is the difference between continuous-time and discrete-time state-space models?

- which matrix are the same for continuous-time and discrete-time state-space models?

- for the rest how do we convert continuous-time state-space models to discrete-time state-space models?

- any shortcuts to remember?

:::

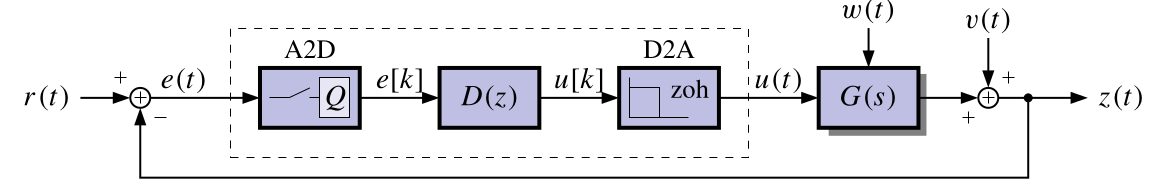

### Discrete-time state-space models

- Computer monitoring of real-time systems requires analog-to-digital (A2D) and digital-to-analog (D2A) conversion

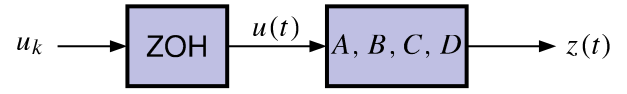

{#fig-kf-020 group="slides"}

- Linear discrete-time systems can also be represented in state-space form:

$$

\begin{aligned}

x_{t+1} &= A_dx_t + B_du_t + w_t && \text{state eqn.}\\

z_t &= C_dx_t + D_du_t + v_t && \text{observation eqn.}

\end{aligned}

$$ {#eq-kalman-filter-discrete-time-state-space-model}

- The subscript **d** emphasizes that, in general, the $A$, $B$, $C$ and $D$ matrices are different for the same discrete-time and continuous-time system.

- The **d** subscript will usually be dropped as it can be interpreted from the context.

### Time (dynamic) response of discrete-time models

The full solution, via induction from $x_{t+1} = A_d x_t + B_d u_t + w_t$, is:

$$

x_{t} = A_d^t x_0 +

\underbrace{\sum_{j=0}^{t-1} A_d^{t-j-1} B_d u_j }_{\text{convolution}}

$$ {#eq-kalman-filter-discrete-time-state-space-model-solution}

Since $z_t = C_d x_t + D_d u_t$, we also have:

$$

z_t=

\underbrace{C_d A_d^t x_0}_{\text{initial response}}+

\underbrace{\sum_{j=0}^{t-1} C_d A_d^{t-j-1} B_d u_j }_{\text{convolution}}

\underbrace{+ D_d u_t}_{\text{feed-through}}

$$ {#eq-kalman-filter-discrete-time-state-space-model-output}

- Comparing with the *continuous-time solution*,

- $e^{At}$ has been replaced by $A_d^t$ and

- integrals have been replaced by summations

Note: we wont cover the proof by induction and these are covered in Linear Systems Theory.

<!-- TODO: find an actual reference for this proof by induction. -->

### Converting plant dynamics to discrete time (1)

- Combine the dynamics of the zero-order hold and the plant.

{#fig-kf-021 group="slides"}

- Recall that the continuous-time state dynamics of the plant are:

$$

\dot{x} = Ax(t) + Bu(t)

$$

- Evaluate $x(t)$ at discrete times. Recall also the solution for $x(t)$:

$$

x(t) = \int_0^t e^{A(t-\tau)} B u(\tau) d\tau

$$

$$

x_{t+1}(t) = x((t+1)\Delta t) = \int_0^{(t+1)\Delta t} e^{A((t+1)\Delta t-\tau)} B u(\tau) d\tau

$$

### Converting plant dynamics to discrete time (2)

- We break up the integral into two pieces:

$$

\begin{aligned}

x_{k+1}(t) &= \int_0^{k\Delta t} e^{A((k+1)\Delta t-\tau)} B u(\tau) d\tau \\

&= \int_0^{k\Delta t} e^{A((k+1)\Delta t-\tau)} B u(\tau) d\tau + \int_{k\Delta t}^{(k+1)\Delta t} e^{A((k+1)\Delta t-\tau)} B u(\tau) d\tau \\

&= \int_0^{k\Delta t} e^{A\Delta t}e^{A((k)\Delta t-\tau)} B u(\tau) d\tau + \int_{k\Delta t}^{(k+1)\Delta t} e^{A((k+1)\Delta t-\tau)} B u(\tau) d\tau \\

&= e^{A\Delta t}x(k\Delta t) + \int_{k\Delta t}^{(k+1)\Delta t} e^{A((k+1)\Delta t-\tau)} B u(\tau) d\tau

\end{aligned}

$$

The first integral has become $A_d \times x(k \Delta t)$

The second will become $B_d \times u(k \Delta t)$ after a little more work

### Converting plant dynamics to discrete time (3)

- In the remaining integral, note that $u(\tau)$ is assumed to be constant from $k\Delta t$ to $(k+1)\Delta t$; and equal to $u(k\Delta t)$.

- We evaluate the integral via change of variables.

- Let $\sigma = (k+1)\Delta t - \tau$,

- $\tau = (k+1)\Delta t - \sigma$, and

- $d\tau = -d\sigma$.

$$

\begin{aligned}

x_{k+1} & = e^{A\Delta t}x(k\Delta t) + \int_{k\Delta t}^{(k+1)\Delta t} e^{A((k+1)\Delta t-\tau)} B u(\tau)\; d\tau \\

&= e^{A\Delta t}x(k\Delta t) + \left [\int_{(k+1)\Delta t}^{\Delta t} e^{A\sigma} B\; d\sigma \right ] u(k\Delta t)\\

&= \underbrace{ e^{A\Delta t}}_{A_d}\; x_k +

\underbrace{ \left [\int_{(k+1)\Delta t}^{\Delta t} e^{A\sigma} B\; d\sigma \right ] u_k }_{B_d}

\end{aligned}

$$

### Computing $A_d, C_d$, and $D_d$

- Summarizing to this point, we have a discrete-time state-space

representation from the continuous-time representation:

$$

x_{k+1} = A_d x_k + B_d u_k

$$

- where

- $A_d = e^{A\Delta t}$ and

- $B_d = \int_0^{\Delta t} e^{A(\Delta t-\sigma)} B d\sigma$.

- Similarly, we have: $z_k = C_d x_k + D_d u_k$.

- where

- $C_d = C$ and

- $D_d = D$.

- [There is no conversion for the $C$ and $D$ matrices,]{.mark}

- [The conversion for $A$ is straightforward via the matrix exponential]{.mark} $A_d = e^{A\Delta t}$.

- If Octave, we can type in: `Ad = expm(A*dT)`.

- Otherwise, we'll need to compute $e^{A\Delta t} = \mathcal{L}^{-1}\left[(sI - A)^{-1}\right]\Big|_{t = \Delta t}$

### Computing $B_d$

- Now we focus on computing $B_d$ . Recall that:

$$

\begin{aligned}

B_d &= \int_0^{\Delta_t} e^{A(\Delta_t-\sigma)} B d\sigma \\

&= \int_0^{\Delta_t} \left( I + A(\sigma) + \frac{1}{2!}A^2(\sigma)^2 + \cdots \right) B d\sigma \\

&= \int_0^{\Delta_t} \left( I + \Delta t + \frac{1}{2!}(\Delta t)^2 + \cdots \right) B d\sigma \\

&= \left( I + \Delta t + \frac{1}{2!}(\Delta t)^2 + A^2 \frac{1}{2!}(\Delta t)^3 + \cdots \right) B \\

&= A^{-1}(e^{A\Delta_t} - I)B \\

&= A^{-1}(A_d - I)B.

\end{aligned}

$$

- If $A$ is invertible, this method works nicely!

- Otherwise, we will need to perform the integral in the first line manually.

- Also, in Octave, `[Ad,Bd]=c2d(A,B,dT)`

### Summary

- Computer monitoring of physical systems requires A2D and D2A conversion.

- The system that is “seen” by the computer is a discrete-time system, and must be

represented by a discrete-time state-space model:

$$

\begin{aligned}

x_{t+1} &= A_dx_t + B_du_t + w_t && \text{state eqn.}\\

z_t &= C_dx_t + D_du_t + v_t && \text{observation eqn.}

\end{aligned}

$$

- Generally, the discrete $A$, $B$, $C$ and $D$ matrices differ from their continuous-time counterparts.

- We have learned to convert from the continuous $A$, $B$, $C$ and $D$ matrices to the discrete-time $A_d$, $B_d$, $C_d$ and $D_d$ matrices.

- Next we will see some examples of converting continuous-time to discrete-time and of simulating discrete-time state-space models.

## How do I simulate a discrete-time state-space model?

### Simulating discrete-time systems

- When simulating discrete-time state-space models, we have (at least) two options.

- There is a direct approach where we write the simulation code ourselves;

- Also, Octave has two functions in its `control` package that help us simulate these

models easily.

- As with continuous-time models, the first of these is `ss`, which creates a state-space object from $A$, $B$, $C$, and $D$ matrices.

- The second is `lsim`, which simulates a state-space object for given input

conditions and an optional initial state.

- This lesson will show examples of both approaches, applied to the *NCP*, *NCV*, and *CT* models that were introduced for continuous-time simulations in Lesson 1.2.2.

- But first, we need to convert these continuous-time state-space models to

equivalent discrete-time models.

## Converting the nearly-constant-position model

- We might be interested to simulate a discrete-time version of the continuous-time nearly-constant-position model.

- Recall that in continuous time, the 2-d NCP model is^[We are treating random input $w(t)$ in the same way we have discussed treating deterministic input $u(t)$ here. As you will learn next week, this assumption is somewhat incorrect, but is "okay for now."] :

$$

\begin{aligned}

\dot x(t) &= 0_{2 \times 2} x(t) + I_2 w(t) \\

z(t) &= x(t) + v(t)

\end{aligned}

$$

In discrete time, we must then have (where ĩt is the sample period):

$$

\begin{aligned}

x[k+1] &= e^{0\Delta t} x[k] + \left( \int_0^{\Delta t} e^{0\sigma)} d\sigma \right) I w[k] \\

z[k] &= x[k] + v[k]

\end{aligned}

$$

### Scaled discrete-time NCP model

TODO

### Time (dynamic) response using toolbox

TODO

### Time (dynamic) response using direct method

TODO

### Converting the nearly-constant-velocity model

TODO

### Computing NCV $A_d$

TODO

### Computing NCV $B_d$

TODO

### Rescaling the discrete-time NCV model

TODO

### Time (dynamic) response using toolbox

TODO

### Time (dynamic) response using direct method

TODO

### Converting the coordinated-turn model

TODO

### Time (dynamic) response using toolbox

TODO

### Time (dynamic) response using direct method

TODO

### Summary

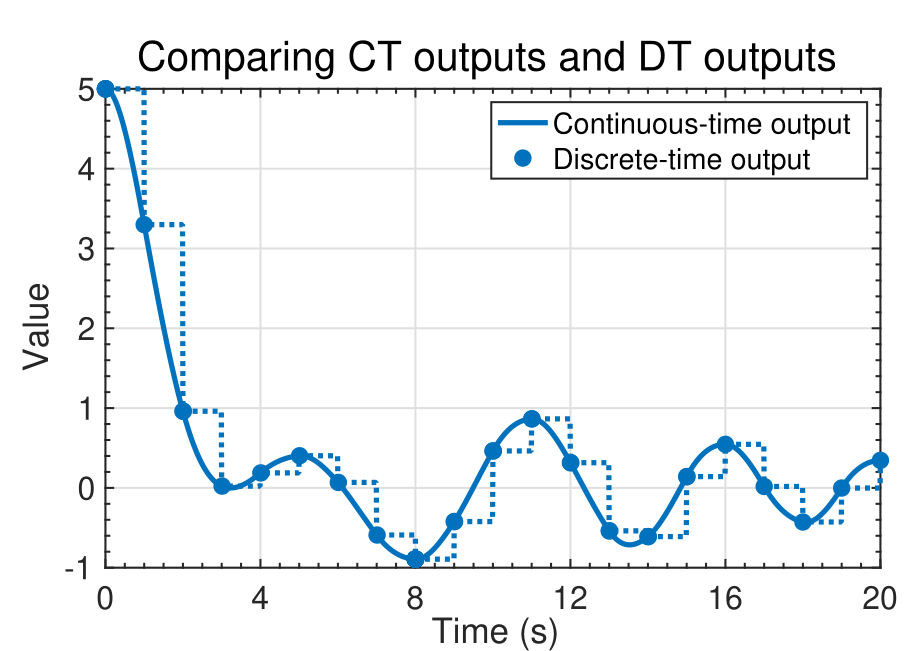

{#fig-kf-022 .column-margin group="slides" width="53mm"}

{#fig-kf-023 .column-margin group="slides" width="53mm"}

- In this lesson, you learned two different ways to simulate discrete-time models in Octave: toolbox and direct.

- Both methods produce identical results; which one you use is mainly a matter of preference.

- You also learned how to convert NCP, NCV, and CT continuous-time models into discrete-time models.

- Note that the continuous-time models and discrete-time models produce identical computations at the sample points, but different values in-between sample points (technically, the discrete-time state and output are not defined in-between sample points).

## Is it even possible for a KF to estimate this model’s state? {#sec-kf-1.2.7-kf-estimation}

### Observability: Can I estimate an initial state?

TODO

### Finding an equation describing output derivatives

TODO

### The observability matrix

TODO

### A brute-force continuous-time observer

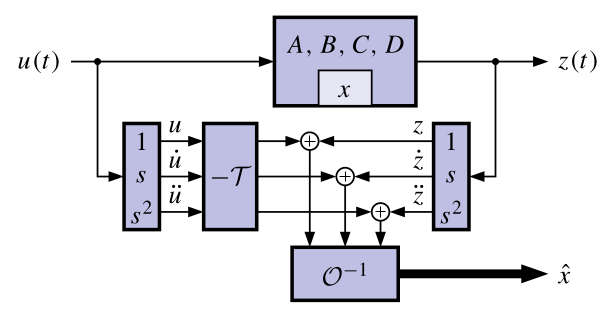

{#fig-kf-024 group="slides"}

- One possible approach to determine the system state, directly

from the equations, uses differentiators.

- A big problem is that differentiators amplify noise, corrupting the state estimate.

- The KF is a more practical observer that doesn’t use differentiators.

- Regardless of the approach, it turns out that the system must be observable to be able to determine its initial state.

- CONCLUSION: If $\mathcal{O}$ is nonsingular, we can determine/estimate the initial state $x(0)$ of the system using only $u(t)$ and $z(t)$ (and so we can estimate $x(t)$ for all $t \geq 0$).

- ADVANCED TOPIC: If some states are unobservable but stable, an observer will still converge to the true state, although $x(0)$ may not be uniquely determined.

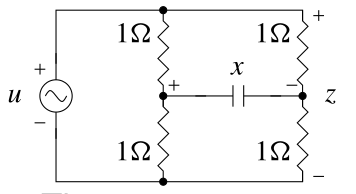

### Examples of unobservable models

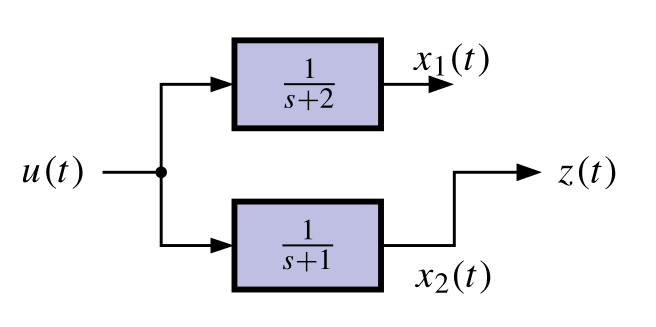

{#fig-kf-025 .column-margin group="slides" width="53mm"}

{#fig-kf-26 .column-margin group="slides" width="53mm"}

{#fig-kf-027 .column-margin group="slides" width="53mm"}

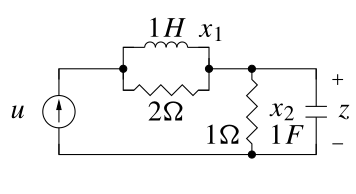

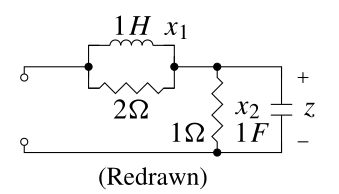

- Consider the following two unobservable circuits:

TODO

### Controllability: Can I get there from here?

- Controllability is a dual idea to observability. We won’t go into

depth here since it is not as important for our topic of study.

- Controllability asks the question, “Can I move from any initial state to any desired state via suitable selection of the control input u.t/?”

- The answer boils down to a condition on a matrix called the controllability matrix

$$

C = [B\; AB\; \cdots\; A^{N-1}B]

$$

- TEST: If C is nonsingular, then the system is controllable.

## Diagonal systems, controllability and observability

{#fig-kf-028 .column-margin group="slides" width="53mm"}

TODO

### Why is a system unobservable? Uncontrollable?

{#fig-kf-030 .column-margin group="slides" width="53mm"}

{#fig-kf-031 .column-margin group="slides" width="53mm"}

TODO

### Equations describing discrete-time outputs

TODO

### Discrete-time observability matrix

TODO

### A brute-force discrete-time observer

TODO

### Discrete-time controllability

TODO

### Summary

- Is it even possible to estimate the state of our model?

- The (continuous- or discrete-time) observability matrix $\mathcal{O}$ tells us whether the initial state can be found using measurements of input and output.

- If $\mathcal{O}$ is invertible, the system is observable and we can find $x(0)$ or $x_0$.

- If $\mathcal{O}$ is not invertible, we cannot find $x(0)$ or $x_0$ uniquely because either a state is not connected to the output or multiple states have the same eigenvalues.

- If we can find the initial state, we can compute it at any other time. Otherwise, we cannot compute the state uniquely at any other time.

- Therefore, we will say/assume that it is necessary for our models be observable for the KF to be able to estimate the state. ^[Advanced topic: A system is detectable if all states that cannot be observed are stable. We can apply KF to a detectable system (as the unobservable states decay toward a known trajectory in the absence of process noise) but uncertainties of unobservable states will generally become large.]